PROTECT DMAC Demonstrates State-of-the-art Data Management Framework as a Model for Multidisciplinary Research

Almost all of today’s automated systems generate some form of data either for diagnostic or analysis purposes. A data management framework and repository are commonly employed whenever a large amount of data is collected, providing a capability to deliver secure and reliable storage, data exploration and data sharing. Designing an effective data management framework plays a critical role in the smooth operation of an organization. Without the support of this key framework, the value of the data collected can be greatly diminished and sharing data may be impossible.

In a research setting, poor data quality can cost productivity, obstruct research aims, and lead to non-reproducible results. The framework must also address security and reliability while still offering efficient and easy access to the data, which is a challenging balance to maintain.

PROTECT researchers recently presented our current data management framework, designed to efficiently manage the data of the NIEHS PROTECT Center. The Data Management and Analysis Core (DMAC) leverages a number of different software tools to facilitate ongoing research in the PROTECT Center, supporting data acquisition, security, cleaning, backup, sharing and visualization. We implement a number of customized tools to streamline the workflow involved, leveraging state-of-the-art software libraries and the Python programming language.

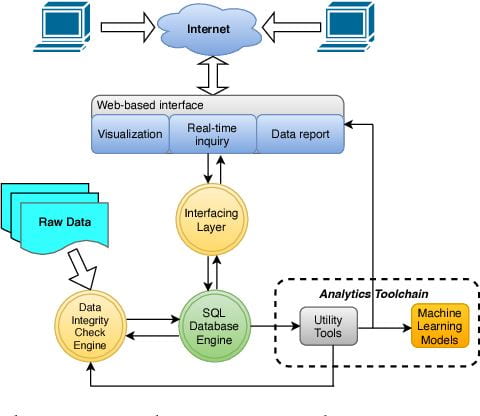

At the heart of our framework lies a SQL database engine (Figure 1), a relational database management system (RDBMS). We leverage Microsoft SQL Server, which seamlessly interfaces with the other elements in our framework. In our data processing pipeline we have developed a number of analytical toolchains to support exploration using machine learning algorithms and models. For the application and web layers, PROTECT utilizes the EarthSoft EQuIS software suite (Figure 1), a commercial software application employed by a large number of environmental research organizations, including the US Environmental Protection Agency (EPA).

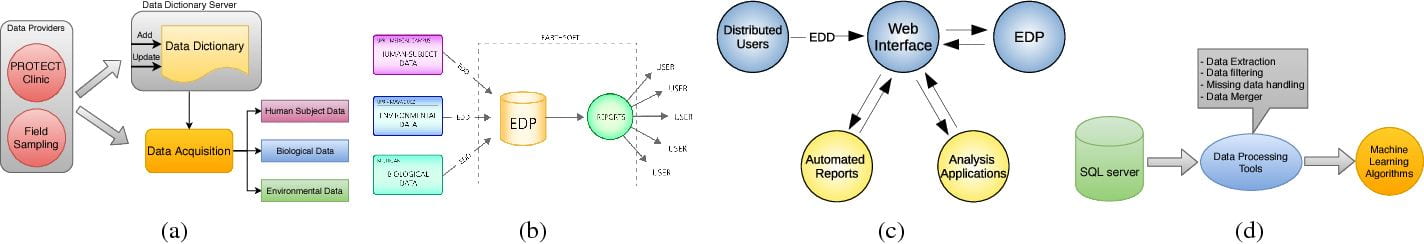

A common data dictionary is at the root of the PROTECT data management plan. Each site in the PROTECT cohort is collecting data from multiple sources (e.g., medical questionnaires and biometry, biological samples, environmental samples, GPS coordinates), providing a rich mix of data sources (Figure 2a). The data dictionaries enable researchers to connect and compare data from a variety of sources, based on a common database schema (Figure 2b). The in-house tools parse the dictionary files and generate application and SQL code to spawn a new repository. This automates the process of adding new data sets into the PROTECT framework.
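As a rough illustration of how a dictionary file could drive schema generation, the sketch below reads a small CSV data dictionary and emits a `CREATE TABLE` statement. It is not the DMAC's actual tooling: the dictionary layout (`field_name`, `data_type`, `required`), the `TYPE_MAP` type mapping, the `dictionary_to_create_table` function and the `water_samples` table are all hypothetical names chosen for this example.

```python
import csv
import io

# Hypothetical mapping from dictionary type names to SQL Server column types.
TYPE_MAP = {
    "integer": "INT",
    "decimal": "FLOAT",
    "text": "NVARCHAR(255)",
    "date": "DATE",
}


def dictionary_to_create_table(table_name, dictionary_csv):
    """Build a CREATE TABLE statement from a CSV data dictionary.

    Assumes one dictionary row per field, with the (hypothetical)
    columns field_name, data_type and required.
    """
    reader = csv.DictReader(io.StringIO(dictionary_csv))
    columns = []
    for row in reader:
        sql_type = TYPE_MAP[row["data_type"].strip().lower()]
        required = row["required"].strip().lower() == "yes"
        nullability = "NOT NULL" if required else "NULL"
        columns.append(f"    [{row['field_name'].strip()}] {sql_type} {nullability}")
    return f"CREATE TABLE [{table_name}] (\n" + ",\n".join(columns) + "\n);"


# Made-up dictionary content, for illustration only.
EXAMPLE_DICTIONARY = """field_name,data_type,required
participant_id,integer,yes
visit_date,date,yes
sample_ph,decimal,no
"""

sql = dictionary_to_create_table("water_samples", EXAMPLE_DICTIONARY)
print(sql)
```

Keeping the type mapping in a single table means a new data set only needs a new dictionary file, not new code, which is the kind of automation the paragraph above describes.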

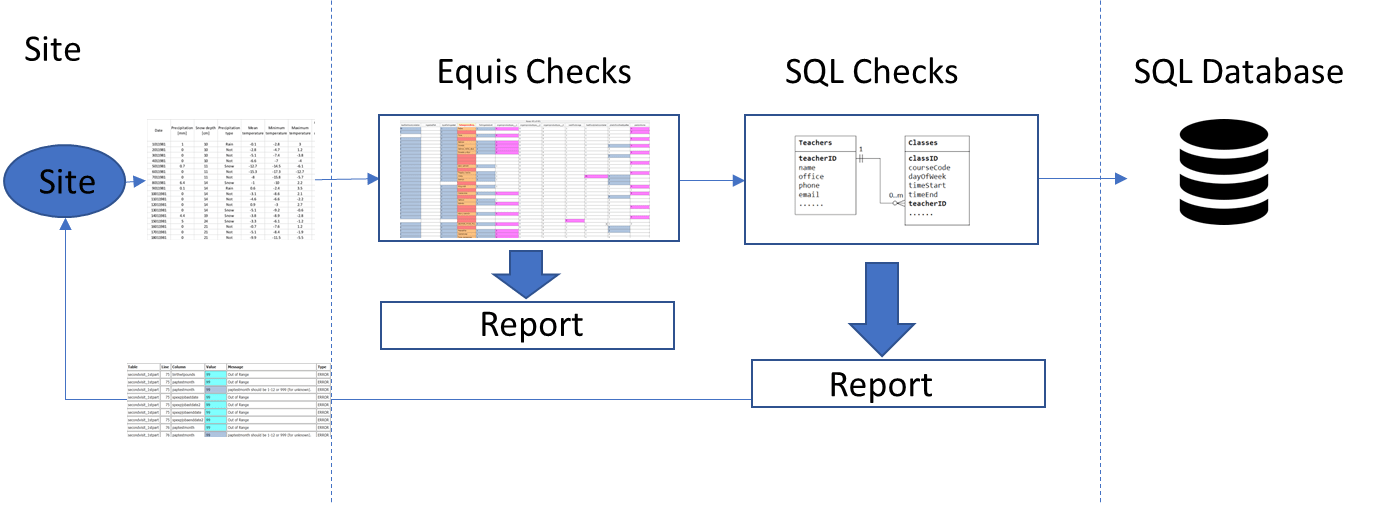

An example of the cleaning pipeline is outlined in Figure 3. Each site shares the data via secure cloud transmission to the DMAC, at which point the data is inspected by the application and database layers. If at any point a mismatch is found in a data import, a report is automatically generated and sent back to the source of the data. The result is a synchronized and clean data repository.
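The import check can be sketched in a few lines of Python. This is only an assumed shape for such a check, not the pipeline shown in Figure 3: the `SCHEMA` layout, the `validate_rows` function and the sample records are hypothetical. Each incoming row is compared against the expected fields and types, clean rows are kept, and every mismatch is collected into a report that could be sent back to the contributing site.

```python
from datetime import date


def parse_date(value):
    """Parse an ISO-formatted date (YYYY-MM-DD); raises ValueError otherwise."""
    return date.fromisoformat(value)


# Hypothetical schema: field name -> (parser, required flag).
SCHEMA = {
    "participant_id": (int, True),
    "visit_date": (parse_date, True),
    "sample_ph": (float, False),
}


def validate_rows(rows, schema=SCHEMA):
    """Split rows into clean rows and a mismatch report."""
    clean, report = [], []
    for index, row in enumerate(rows, start=1):
        problems = []
        # Flag fields that the schema does not know about.
        for field in row:
            if field not in schema:
                problems.append(f"unexpected field '{field}'")
        # Check required fields and value types.
        for field, (parser, required) in schema.items():
            value = row.get(field, "")
            if value == "":
                if required:
                    problems.append(f"missing required field '{field}'")
                continue
            try:
                parser(value)
            except ValueError:
                problems.append(f"bad value {value!r} for '{field}'")
        if problems:
            report.append({"row": index, "problems": problems})
        else:
            clean.append(row)
    return clean, report


# Made-up records, for illustration only.
incoming = [
    {"participant_id": "101", "visit_date": "2018-03-14", "sample_ph": "7.2"},
    {"participant_id": "abc", "visit_date": "2018-03-15", "sample_ph": ""},
    {"participant_id": "103", "visit_date": "15/03/2018", "sample_ph": "6.9"},
]

clean, report = validate_rows(incoming)
for entry in report:
    print(f"row {entry['row']}: {'; '.join(entry['problems'])}")
```

In this sketch only the first record reaches the clean set; the second and third are reported with the specific field that failed, so the source site knows what to correct before resubmitting.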

The PROTECT DMAC framework serves as a model for other researchers to follow when building or extending their own data management infrastructures. The DMAC will continue to share our model of what an ideal data management framework should look like with other multidisciplinary research centers that are faced with managing diverse types of data collected from multiple disconnected sources.

For questions, please contact our DMAC Leader, Dave Kaeli, or our Database Admin, Zlatan Feric.

Publication Referenced

An Efficient Data Management Framework for Puerto Rico Testsite for Exploring Contamination Threats (PROTECT)

S Dong, Z Feric, L Yu, D Kaeli, J Meeker, IY Padilla, J Cordero, CV Vega, …

2018 IEEE International Conference on Big Data (Big Data), 5316-5318